7 Best Crypto Trading Simulators for Strategy Testing

Picture this: you've got $5,000 burning a hole in your pocket, and Bitcoin just made another wild swing. Do you jump in, or do you wish you could test your strategy first without risking real money? That's where crypto trading simulators come in, offering a risk-free environment to practice trading techniques, refine your approach, and build confidence before putting actual capital on the line. This guide explores the best crypto trading simulators available today, showing you how paper trading platforms and demo accounts can transform beginners into informed traders while helping experienced investors backtest strategies and experiment with new trading methods.

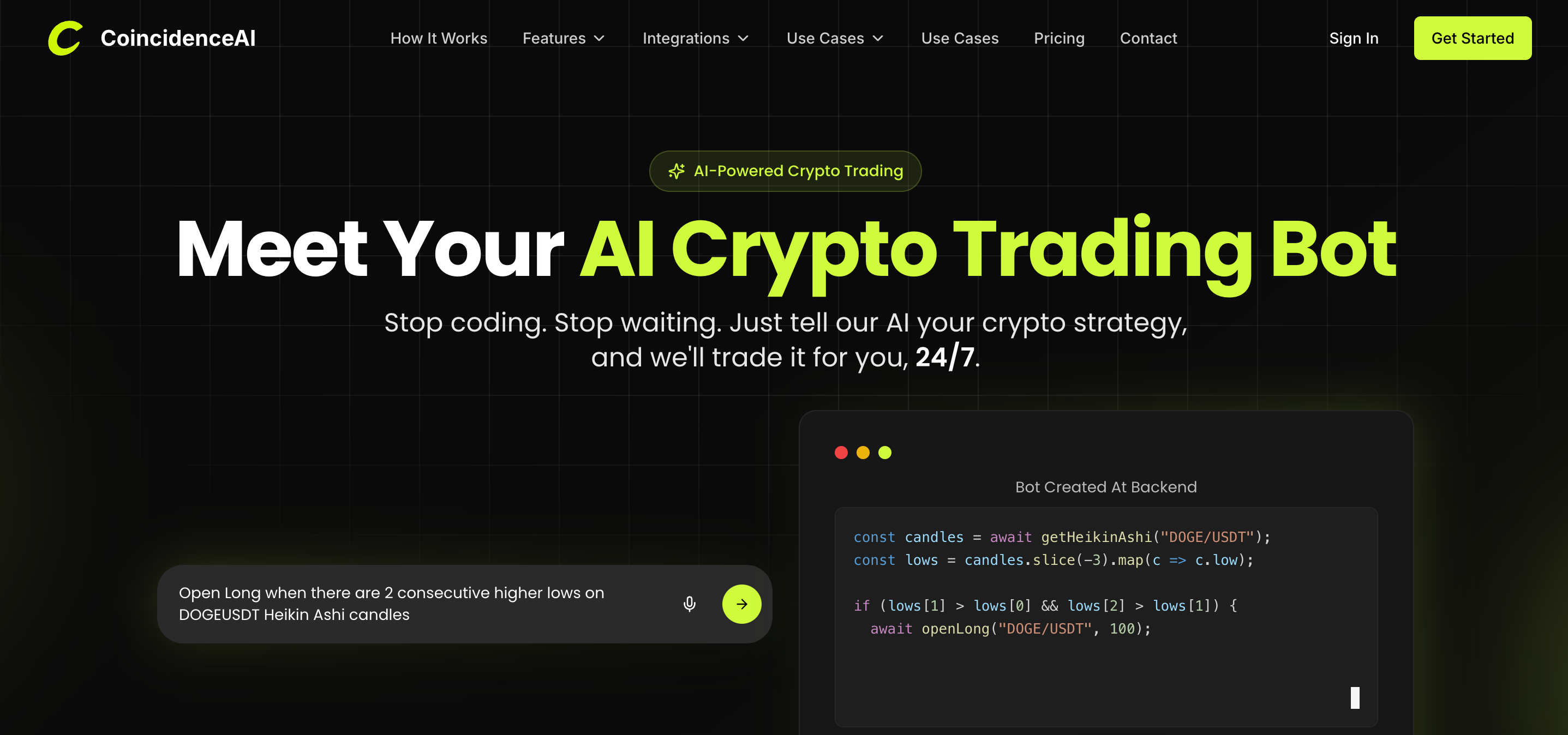

Coincidence’s AI crypto trading bot takes your preparation further by combining simulation capabilities with intelligent automation. It learns from market patterns and executes trades based on data-driven signals, giving you a practical tool that bridges the gap between practice and live trading.

Summary

- Most crypto traders lose money testing strategies in live markets because they skip systematic validation. Research analyzing over 66,000 individual investors found that the most active traders, those learning through frequent market participation, earned 11.4 percent annually compared to 17.9 percent for market benchmarks.

- Trading simulators that only enable manual practice replicate the same learning curve as live trading without the financial damage. Testing strategies one trade at a time accumulates anecdotes instead of evidence.

- Historical backtesting separates effective strategy validation from simulated gambling. Bitcoin experienced drawdowns greater than 20 percent more than 20 times between 2015 and 2022, according to Fidelity Digital Assets research. Strategies tested only against recent months never encounter these regime changes, creating an illusion of stability that evaporates when volatility returns or market structure shifts.

- Most simulators assume traders arrive with fully defined strategies ready to test, but strategy creation is harder than execution. Professional quant funds evaluate strategies across thousands of historical trades during backtesting to determine statistical significance before deployment.

- Execution costs destroy more strategies than poor entry signals when simulators ignore market reality. A scalping approach generating 0.3 percent per trade in backtesting loses its entire edge once you incorporate 0.1 percent trading fees and 0.05 percent average slippage on both entry and exit.

Coincidence’s AI crypto trading bot addresses this by converting plain English strategy descriptions into structured backtests that run across historical crypto market data in seconds, then deploying validated strategies as automated bots across exchanges like Bybit and KuCoin.

Why Most New Crypto Traders Lose Money Testing Strategies

New crypto traders lose money testing strategies because they treat live markets as their laboratory. Every untested hypothesis costs real capital, and without systematic validation, those costs accumulate faster than learning happens. The result isn't just financial loss, it's the erosion of confidence before traders ever determine whether their approach has statistical merit.

The pattern surfaces consistently across trading communities. Someone opens an exchange account, reads about a promising indicator or entry signal, and immediately begins placing trades to "see if it works." Each position becomes a dual-purpose transaction: part experiment, part gamble.

Stop Gambling With Savings

When the trade succeeds, it validates nothing about the strategy's reliability. When it fails, the lesson comes with a price tag attached. This approach inverts the scientific method. Instead of forming a hypothesis, testing it in controlled conditions, and then deploying it with capital, traders skip directly to deployment. They're essentially running A/B tests with their savings account.

The Cost of Learning Through Loss

Academic research quantifies the cost of this experimentation. In their study published in the Journal of Finance, economists Brad Barber and Terrance Odean analyzed trading records from over 66,000 individual investors.

The most active traders, those who tested strategies through frequent market participation, earned an average annual return of 11.4 percent, compared with 17.9 percent for the broader market benchmark. That 6.5 percentage point gap represents the tuition paid for learning what doesn't work.

Seeking Statistical Edges

The study reveals something more troubling than simple underperformance. Active traders weren't just slightly behind the market; they were systematically destroying value through strategies that lacked consistent statistical edges. Each trade felt like progress, like gathering experience, but the aggregate outcome showed they were paying to discover that their methods didn't hold up across different market conditions.

The Deceptive Nature of Volatility in Crypto Trading

Crypto markets amplify this dynamic. Volatility creates short-term wins that feel like validation. A trader tests a breakout strategy, catches one successful move, and assumes they've found an edge. Meanwhile, the same approach fails across dozens of other setups, but those losses blend into the noise of a volatile portfolio. The illusion of competence builds while capital quietly drains away.

When Intuition Replaces Validation

The misconception starts with how learning feels. Direct market participation seems like the fastest path to understanding because it's visceral and immediate. Prices move, emotions spike, decisions get made under pressure. That intensity creates the sensation of rapid skill development. But intensity isn't the same as effectiveness.

A trader who places fifty trades in a month without systematic validation learns fifty isolated lessons, not a coherent strategy. They discovered that momentum worked on Tuesday, mean reversion worked on Thursday, and both failed on Friday. Without a framework for testing whether these patterns repeat reliably, each trade is just another data point in an uncontrolled experiment. This is where most trading journeys stall.

Pattern Extraction Struggles

Traders accumulate experiences but struggle to extract patterns. They know what happened, but not why it happened or whether it will happen again. The search for consistency leads them backward, toward tools that should have come first:

- Backtesting platforms

- Paper trading accounts

- Simulation environments

Many traders eventually search for the best crypto trading simulator precisely because they've recognized this gap. They want to test ideas without bleeding capital, to validate strategies under realistic conditions before risking money. The impulse is correct. The timing is just several thousand dollars too late.

The Discipline Gap

Starting small doesn't solve the underlying problem; it just reduces the cost per lesson. A trader who deposits $500 instead of $5,000 still faces the same structural issue: they're testing strategies in an environment where every mistake has financial consequences. The learning curve doesn't compress because the stakes are lower. It just becomes more affordable to stay confused longer.

The advice to "treat your first month as tuition" acknowledges this reality while normalizing it. Yes, early losses are common. But framing them as inevitable obscures the fact that they're avoidable. Traders don't need to pay tuition to the market when simulation tools exist specifically to provide consequence-free testing environments.

Proof Before Capital

What's missing isn't just a practice space. It's the recognition that strategy validation should precede capital deployment, not follow it. Traders need to prove to themselves that an approach works across multiple market conditions, timeframes, and asset pairs before risking real money. Without that proof, they're not trading; they're guessing with consequences.

The Real Problem With Most Crypto Trading Simulators

Most crypto trading simulators let you practice placing trades, but they don't answer whether your strategy has a statistical edge. They replicate the experience of live trading without the financial risk, which sounds useful until you realize you're still learning one trade at a time instead of testing systematically across hundreds of market scenarios.

The critical gap becomes visible when you compare what traders actually need versus what most simulators provide. A trader:

- Opens a paper trading account

- Simulates buying a breakout

- Watches the price move favorably

- Feels a rush of validation

That single successful trade proves nothing about whether the approach works reliably. It might reflect genuine pattern recognition, or it might just be Tuesday's volatility creating the illusion of competence.

Why Individual Trades Don't Build Knowledge

When you test strategies through isolated trades, you're collecting anecdotes instead of evidence. Each simulated position tells you what happened in one specific market condition, during one timeframe, with one asset. You learn that momentum worked when Bitcoin broke resistance at 3 PM, but you don't learn whether momentum strategies generate consistent returns across different:

- Volatility regimes

- Market structures

- Timeframes

The same frustration surfaces repeatedly in trading communities. Someone:

- Tests a strategy for two weeks

- Catches a few profitable setups

- Deploys real capital only to watch it fail across the next month

The Importance of Adapting to Shifting Market Conditions

The strategy didn't change. The market conditions shifted, and the trader never validated whether their approach held up:

- When volatility compressed

- When correlations reversed

- When liquidity dried up during off-peak hours

The Illusion of Mastery

Paper trading often replicates the same learning curve as live trading, just without the financial damage. You're still experimenting one decision at a time, accumulating experiences that don't aggregate into systematic understanding. A profitable paper trade feels validating at the moment, but it teaches you nothing about:

- Drawdown patterns

- Win rate consistency

- How the strategy behaves when the market structure fundamentally changes

The Historical Testing Problem

What separates effective strategy validation from simulated gambling is the ability to test across time periods you didn't personally experience. Historical backtesting lets you run a strategy against years of past price data, measuring how it would have performed during:

- The 2021 bull run

- The 2022 bear market

- The sideways chop of 2023

This isn't about predicting the future. It's about determining whether a pattern repeats reliably enough to justify risking capital.

You can simulate buying Ethereum right now, but you can't test whether that same entry signal would have generated positive returns across the last three years of market cycles. Without historical validation, you're trusting that recent market behavior will continue indefinitely, which is a bet, not a strategy.

Challenges of Data Limitations in Crypto Trading

The data constraints in crypto make this even more critical. Unlike traditional markets, where traders can backtest across decades of price history, crypto offers roughly a decade of usable data for major assets and far less for newer tokens. That limited dataset makes it difficult to distinguish between genuine statistical edges and patterns that worked temporarily during specific market regimes. A trader who validates their approach across only six months of recent data might be optimizing for conditions that won't repeat as institutional participation changes market dynamics.

Traders seeking validation face an uncomfortable reality. Testing across multiple coins and timeframes provides some evidence of generalization, but insufficient historical depth means you're always working with incomplete information. The strategy that worked across ten altcoins during the last bull market might depend on catching momentum moves that won't repeat with the same magnitude as markets mature and volatility compresses.

What Systematic Testing Actually Requires

The value of a trading simulator isn't in making you comfortable with order types or interface navigation. It's in letting you measure performance metrics that matter before capital deployment.

- Does the strategy maintain positive expectancy across different market conditions?

- What's the maximum drawdown you should expect?

- How does performance change when you incorporate realistic trading fees and slippage?
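The first and third questions can be checked with a few lines of code once you have a list of backtested trades. The sketch below is illustrative only: the trade returns, the regime labels, and the flat 0.3% round-trip cost are made-up assumptions, not output from any platform.

```python
from statistics import mean

def expectancy(trade_returns, cost_per_trade=0.0):
    """Average net return per trade after subtracting a flat round-trip cost."""
    return mean(r - cost_per_trade for r in trade_returns)

def expectancy_by_regime(trades, cost_per_trade=0.0):
    """trades: list of (regime_label, gross_return) tuples."""
    buckets = {}
    for regime, gross_return in trades:
        buckets.setdefault(regime, []).append(gross_return)
    return {
        regime: expectancy(returns, cost_per_trade)
        for regime, returns in buckets.items()
    }

# Hypothetical backtest output: (market regime, gross return of one trade)
trades = [
    ("bull", 0.020), ("bull", -0.010), ("bull", 0.015),
    ("bear", -0.012), ("bear", 0.004), ("bear", -0.008),
    ("sideways", 0.003), ("sideways", -0.004), ("sideways", 0.002),
]

gross = expectancy_by_regime(trades)
net = expectancy_by_regime(trades, cost_per_trade=0.003)  # assumed 0.3% round trip

for regime in gross:
    print(f"{regime:9s} gross {gross[regime]:+.3%}  net {net[regime]:+.3%}")
```

In this toy data the sideways bucket shows a small positive gross expectancy that turns negative once costs are subtracted, which is the kind of result a single paper trade would never reveal.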

The Transformation From Pattern Recognition to Evidence-Based Systematic Validation

These questions can't be answered through manual paper trading. They require running the strategy across hundreds or thousands of historical setups, measuring consistency, and stress-testing assumptions. You need to see how the approach performs during:

- Bull markets

- Bear markets

- Long sideways periods where most strategies bleed slowly through fees and whipsaw losses

Platforms that enable this kind of testing bridge the gap between simulation and systematic validation. They let traders move from "this worked today" to "this worked across 500 setups spanning multiple market regimes, with realistic costs incorporated and drawdown patterns measured." That shift transforms trading from pattern-recognition gambling into evidence-based decision-making.

The Strategic Transition From System Validation to Automated Execution

Once traders validate a strategy through systematic testing, the natural progression isn't more manual execution. It's automation. Solutions like Coincidence AI let traders deploy validated strategies as AI-powered bots that execute across multiple exchanges without requiring constant manual intervention.

The simulator becomes the proving ground where strategies earn the right to run autonomously, and automation becomes the tool that executes those proven approaches with a consistency that manual trading cannot maintain.

The Measurement Gap

Most simulators track portfolio balance and individual trade results, which creates the illusion of comprehensive performance tracking. But those metrics don't reveal the information traders actually need.

A simulated portfolio that grows 15% over two months tells you nothing about whether that growth came from consistent edge execution or from catching two lucky momentum trades that masked dozens of small losses. Meaningful performance measurement requires breaking down results by:

- Market condition

- Timeframe

- Setup type

Decoding Market Performance

- You need to know whether the strategy performs better during high- or low-volatility periods.

- You need to see how win rates change when trading during Asian market hours versus US market hours.

- You need to measure whether the approach generates returns through frequent small wins or infrequent large wins, because those patterns require completely different risk management approaches.
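One way to get that kind of breakdown is to group trades by the session in which they were opened. This is a minimal sketch: the session boundaries in UTC and the sample trades are assumptions chosen for illustration, and you would substitute your own definitions and your simulator's exported trade log.

```python
from collections import defaultdict

def session_for_hour(hour_utc):
    # Assumed, illustrative session boundaries in UTC; adjust to your own definition.
    if 0 <= hour_utc < 8:
        return "asia"
    if 13 <= hour_utc < 21:
        return "us"
    return "other"

def breakdown(trades):
    """trades: list of (entry_hour_utc, trade_return) -> per-session statistics."""
    groups = defaultdict(list)
    for hour, ret in trades:
        groups[session_for_hour(hour)].append(ret)

    stats = {}
    for session, returns in groups.items():
        wins = [r for r in returns if r > 0]
        losses = [r for r in returns if r <= 0]
        stats[session] = {
            "trades": len(returns),
            "win_rate": len(wins) / len(returns),
            "avg_win": sum(wins) / len(wins) if wins else 0.0,
            "avg_loss": sum(losses) / len(losses) if losses else 0.0,
            "total": sum(returns),
        }
    return stats

# Hypothetical trade log: (entry hour in UTC, return of the trade)
sample = [
    (2, 0.004), (3, 0.003), (5, -0.010), (6, 0.002),
    (14, 0.030), (15, -0.008), (18, -0.007), (20, -0.006),
]
report = breakdown(sample)
for session, row in report.items():
    print(session, row)
```

In this made-up sample the Asian-hours trades win 75% of the time yet lose money overall, while the US-hours trades win only 25% of the time and come out ahead. That is the frequent-small-wins versus infrequent-large-wins distinction expressed in numbers.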

The Critical Distinction Between Superficial Practice and Strategic Validation

Without this granular analysis, traders optimize for the wrong metrics:

- They chase higher win rates without realizing their losing trades are twice as large as their winners.

- They celebrate monthly returns without measuring the drawdown required to achieve them.

- They assume recent performance will continue without testing whether the strategy's edge persists across different volatility regimes.

The simulators that actually prepare traders for live markets don't just track what happened. They measure why it happened, under what conditions it's likely to repeat, and what risks accompany the approach. That's the difference between practice and validation.


What Makes the Best Crypto Trading Simulator

The best crypto trading simulator transforms strategy validation from guesswork into a measurable process. It provides historical backtesting across multiple market cycles, realistic execution conditions including slippage and fees, and performance analytics that reveal not just whether a strategy made money, but why it worked and under what conditions it's likely to fail.

Execution vs. Expectancy

Most traders discover this distinction too late. They spend weeks practicing manual entries in a paper trading account, building confidence through repetition, only to realize they've validated nothing about statistical reliability. The simulator felt productive because trades executed smoothly, and the interface became familiar.

But comfort with execution mechanics tells you nothing about whether your entry signals generate positive expectancy across different volatility regimes.

Historical Backtesting

Strong simulators test strategies against years of market data, not just recent price action. Testing a breakout strategy across Bitcoin's history means encountering the 2017 parabolic rally, the 2018 bear market collapse, the 2020 pandemic volatility spike, and the 2021 institutional adoption phase. Each period presented radically different market structures:

- Trending versus choppy

- High volume versus thin liquidity

- Correlated moves versus isolated price action

According to Fidelity Digital Assets, Bitcoin experienced drawdowns greater than 20% more than 20 times between 2015 and 2022. A strategy tested only during the last six months might completely avoid these drawdown periods, creating the illusion of stability that evaporates the moment volatility returns.

The Necessity of Testing Across Historical Market Regimes and Maturing Cycles

Historical testing forces strategies to prove they can survive regime changes, not just capitalize on recent patterns.

This becomes critical when you consider how crypto markets mature. Early adopter behavior differs fundamentally from institutional participation. Retail-driven momentum moves that worked reliably in 2017 may not repeat with the same magnitude as market depth increases and volatility compresses. Without testing across multiple cycles, you're optimizing for conditions that might already be obsolete.

Realistic Market Conditions

Simulators that ignore the realities of execution produce dangerously optimistic results. Your backtest shows consistent profits, but it assumes every limit order fills at your exact price, every market order executes instantly with zero slippage, and transaction costs don't exist. Then you deploy capital and discover that:

- Fast-moving markets skip past your limit prices

- Market orders fill several basis points worse than expected

- Fees consume 15% of your theoretical edge

The gap between simulated perfection and actual execution destroys more strategies than poor entry signals. A scalping approach that generates 0.3% per trade in backtesting might be completely unprofitable once you incorporate 0.1% trading fees and 0.05% average slippage: paid on both entry and exit, those costs add up to the full 0.3%. The strategy didn't fail because the signals were wrong. It failed because the testing environment didn't reflect how orders actually execute.
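The scalping arithmetic is easy to check. The sketch below uses the figures from the example and treats the number of sides on which costs are charged as a parameter, since that depends on how your exchange quotes fees. The function name is illustrative.

```python
def net_edge_per_trade(gross_edge, fee_rate, slippage, sides=2):
    """Gross edge minus fees and slippage charged on each side of the trade."""
    return gross_edge - sides * (fee_rate + slippage)

gross = 0.003   # 0.3% gross edge per trade from the backtest
fee = 0.001     # 0.1% trading fee
slip = 0.0005   # 0.05% average slippage

one_side = net_edge_per_trade(gross, fee, slip, sides=1)    # costs charged once
round_trip = net_edge_per_trade(gross, fee, slip, sides=2)  # costs on entry and exit

print(f"costs charged once:   {one_side:.4%} net per trade")
print(f"costs on both sides:  {round_trip:.4%} net per trade")
```

Charged once, the costs take half the edge. Charged on both entry and exit, they consume all of it, before accounting for any losing trades.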

Simulating Market Reality

Accurate simulation includes order book dynamics, typical spread costs during your trading hours, and realistic fill assumptions based on order size relative to market depth. These details feel tedious until you realize they're the difference between a strategy that works on paper and one that generates actual returns.
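A simple way to model fills based on order size relative to depth is to walk the order book level by level. This is a minimal sketch with a made-up three-level ask book; real simulators may also model queue position, latency, and book changes during execution, none of which is shown here.

```python
def simulate_market_buy(asks, quantity):
    """Walk ask levels [(price, size), ...] sorted best-first; return the average fill price.

    Raises ValueError if the book does not hold enough size to fill the order.
    """
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        take = min(remaining, size)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 1e-12:
        raise ValueError("not enough depth to fill the order")
    return cost / quantity

def slippage_vs_best(asks, quantity):
    """Average fill price relative to the best ask, as a fraction."""
    best_ask = asks[0][0]
    return simulate_market_buy(asks, quantity) / best_ask - 1.0

# Hypothetical ask side of an order book: (price, available size)
asks = [(100.0, 1.0), (100.5, 2.0), (101.0, 5.0)]

small = slippage_vs_best(asks, 0.5)  # fits inside the best level
large = slippage_vs_best(asks, 4.0)  # eats through three levels

print(f"0.5 units: {small:.3%} slippage")
print(f"4.0 units: {large:.3%} slippage")
```

The same signal produces very different results depending on position size: the small order fills at the quoted price, while the larger one pays half a percent more on average in this toy book.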

Strategy Experimentation

Effective simulators let you modify variables quickly and measure how changes affect outcomes. Instead of testing whether a moving average crossover works, you test whether it works better with 10-day and 20-day periods versus 15-day and 30-day periods. You measure performance across different:

- Stop-loss distances

- Position sizing rules

- Exit timing approaches

This experimentation reveals which strategy elements actually drive performance. Often, traders discover that the specific indicator settings matter far less than the broader market regime.

The Range-Bound Trap

A momentum strategy might work across any reasonable parameter set during trending markets and fail across all of them during range-bound periods. That insight changes everything about how you deploy the approach. The pattern surfaces constantly in trading communities. Someone optimizes an RSI strategy until backtests look perfect, then watches it fail immediately in live markets.

The problem wasn't insufficient optimization. It was over-optimization of historical noise rather than genuine edge identification. Simulators that enable rapid experimentation help traders distinguish between parameter sets that capture real patterns and those that merely fit past data.
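A common guard against fitting noise is to compare parameter sets on one slice of history and then check them on a slice they never saw. The sketch below does this for the two moving-average pairs mentioned earlier. It is a simplified illustration, not any platform's method: the price series is a seeded random walk, the 0.1% cost per position change is an assumption, and the 70/30 split is arbitrary.

```python
import math
import random

def sma(prices, window, i):
    """Simple moving average of the `window` prices ending at index i (inclusive)."""
    return sum(prices[i - window + 1 : i + 1]) / window

def crossover_return(prices, fast, slow, cost=0.001):
    """Total return of a long-only SMA crossover: hold while fast SMA > slow SMA.

    The signal computed at bar i is applied to the move from bar i to i + 1,
    and `cost` is charged on every position change.
    """
    equity = 1.0
    position = 0
    for i in range(slow - 1, len(prices) - 1):
        target = 1 if sma(prices, fast, i) > sma(prices, slow, i) else 0
        if target != position:
            equity *= 1.0 - cost
            position = target
        if position:
            equity *= prices[i + 1] / prices[i]
    return equity - 1.0

def sweep(prices, pairs, split=0.7):
    """Return {(fast, slow): (in_sample_return, out_of_sample_return)}."""
    cut = int(len(prices) * split)
    in_sample, out_of_sample = prices[:cut], prices[cut:]
    return {
        pair: (crossover_return(in_sample, *pair),
               crossover_return(out_of_sample, *pair))
        for pair in pairs
    }

# Synthetic daily closes from a seeded random walk (a stand-in for real history)
rng = random.Random(42)
prices = [100.0]
for _ in range(999):
    prices.append(prices[-1] * math.exp(rng.gauss(0.0, 0.02)))

for pair, (ins, oos) in sweep(prices, [(10, 20), (15, 30)]).items():
    print(f"SMA {pair}: in-sample {ins:+.2%}, out-of-sample {oos:+.2%}")
```

If a parameter set looks excellent in-sample and falls apart out-of-sample, that gap is a warning sign that the optimization captured noise rather than a repeatable pattern.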

Performance Analytics

Profit and loss numbers tell an incomplete story. A strategy that gained 40% over six months sounds impressive until you discover it experienced a 35% drawdown along the way. Most traders can't psychologically survive watching their account lose a third of its value, even if the strategy eventually recovers.

The Viability Constraint

The risk profile makes the approach unusable regardless of its theoretical returns. Serious simulators surface metrics that reveal how strategies actually behave:

- Maximum drawdown

- Average drawdown duration

- Win rate versus average win size

- Consecutive losing trades

- Risk-adjusted returns through measures like the Sharpe ratio

These statistics answer the questions that matter.

- Can you psychologically tolerate this strategy's losing streaks?

- Does it generate returns efficiently relative to the risk required?

- How long should you expect to wait between profitable periods?
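The metrics listed above are straightforward to compute from an equity curve and a list of trade returns. The sketch below uses a made-up equity curve that gains 40% overall while passing through a 35% drawdown, echoing the earlier example. The function names are illustrative, and the Sharpe calculation assumes daily bars in a market that trades 365 days a year.

```python
from statistics import mean, stdev

def max_drawdown(equity):
    """Largest peak-to-trough decline, as a positive fraction of the peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst

def drawdown_durations(equity):
    """Lengths (in bars) of each stretch spent below a prior peak."""
    durations = []
    peak = equity[0]
    current = 0
    for value in equity[1:]:
        if value >= peak:
            if current:
                durations.append(current)
            peak = value
            current = 0
        else:
            current += 1
    if current:
        durations.append(current)  # still underwater at the end of the data
    return durations

def max_consecutive_losses(trade_returns):
    """Longest run of back-to-back losing trades."""
    longest = current = 0
    for r in trade_returns:
        current = current + 1 if r < 0 else 0
        longest = max(longest, current)
    return longest

def sharpe_ratio(period_returns, periods_per_year=365, risk_free=0.0):
    """Annualized Sharpe ratio; the default assumes daily bars in a 24/7 market."""
    excess = [r - risk_free / periods_per_year for r in period_returns]
    return mean(excess) / stdev(excess) * periods_per_year ** 0.5

# Hypothetical equity curve: ends up 40% but drops 35% from its peak on the way
equity = [100, 110, 105, 115, 140, 91, 120, 135, 140]
returns = [b / a - 1 for a, b in zip(equity, equity[1:])]

print(f"total return:        {equity[-1] / equity[0] - 1:.0%}")
print(f"max drawdown:        {max_drawdown(equity):.0%}")
print(f"avg drawdown length: {mean(drawdown_durations(equity))} bars")
print(f"max losing streak:   {max_consecutive_losses(returns)}")
```

Reading these numbers side by side is what turns "the strategy made 40%" into an honest answer about whether you could have held it through the worst stretch.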

The Dangers of Misguided Trading Objectives

Without granular analysis, traders chase the wrong goals.

- They optimize for higher win rates without realizing their average loss exceeds their average win by enough to make the strategy unprofitable.

- They celebrate monthly returns without measuring whether those gains required accepting unacceptable volatility.

- They assume recent performance predicts future results without testing whether the edge persists across different market conditions.

Automation Capabilities

Once a strategy proves itself through systematic testing, manual execution becomes the bottleneck. You've validated that a specific setup generates positive expectancy across hundreds of historical occurrences, but now you need to monitor markets constantly to catch each new instance. You miss setups during sleep hours, hesitate during emotional moments, and execute inconsistently when discretion overrides rules.

Bridging Logic and Execution

The most advanced simulators bridge directly to automated execution. The same strategy logic that ran through historical testing deploys as a live algorithm that monitors markets, identifies setups, and executes trades without requiring constant manual intervention. This progression transforms validation into deployment, closing the gap between proving a strategy works and capturing its edge consistently.

Platforms like Coincidence AI represent this evolution from simulation to intelligent automation. Traders validate strategies through systematic testing, then deploy them as AI-powered bots that execute across multiple exchanges with the consistency impossible to maintain manually.

The simulator becomes the proving ground where strategies earn the right to run autonomously, and automation becomes the tool that executes those proven approaches without the emotional interference and execution delays that undermine manual trading.


7 Best Crypto Trading Simulators to Practice and Test Strategies

1. Roostoo

Roostoo builds its environment around social trading and chart analysis. The platform provides unlimited virtual funds across more than 100 cryptocurrencies, letting traders practice position sizing and portfolio allocation without capital constraints.

The integration with TradingView charts creates a unified workspace where analysis and execution happen in the same interface. You can apply technical indicators, draw support and resistance levels, and place simulated trades directly from the chart. This removes the friction of switching between analysis tools and trading platforms.

Assessing Skill Through Competitive Trading Context

Trading competitions add a performance measurement layer that manual practice typically lacks. When you compete against other simulated traders in the same market conditions, you gain context about whether your returns reflect genuine skill or simply favorable timing. A 15% gain means something different if the median competitor achieved 20% versus 8% during the same period.

The social features reveal how different approaches perform simultaneously. You're not just testing in isolation. You're observing what works for momentum traders versus mean-reversion traders, scalpers versus swing traders, all operating under identical market conditions. That comparative context accelerates learning beyond what individual practice provides.

2. Gainium

Gainium shifts focus from manual execution to systematic strategy development. The platform provides over $100,000 in simulated capital and supports both spot and futures markets, allowing testing of position-sizing approaches that would require substantial real capital.

Transforming Testing Through Algorithmic Automation

The automation capabilities matter most here. You can configure algorithmic strategies, define entry and exit rules, set risk parameters, and watch how those bots behave across different market conditions. This transforms testing from "can I execute this trade correctly" to "does this systematic approach generate consistent returns."

According to industry research published by FXReplay, 90% of retail traders lose money, largely because they lack systematic validation before deploying capital. Gainium addresses this by making bot testing the core function rather than an advanced feature. The platform assumes you're building toward automation, not just practicing manual trades.

Uncovering Market Regime Dependencies Through Testing

The repeated testing cycle reveals patterns that single trades never expose. A strategy might work brilliantly during trending markets and fail completely during consolidation. Running it across multiple simulated weeks surfaces these regime dependencies before you discover them with real money.

3. Bitsgap

Bitsgap replicates multi-exchange trading environments, supporting demo accounts across more than fifteen cryptocurrency exchanges. This matters when your strategy depends on specific exchange characteristics like:

- Order book depth

- Typical spread costs

- Available trading pairs

Bitsgap provides simulated balances equivalent to one Bitcoin plus USDT, enabling portfolio strategies that involve multiple assets and position types. You can test how the correlation between assets affects overall portfolio volatility, or whether diversification across exchanges reduces execution risk.

Automating Range-Bound Strategies

Grid trading features let traders automate range-bound strategies that profit from price oscillation rather than directional movement. Testing these approaches in simulation reveals whether the strategy generates sufficient profit per cycle to cover trading fees and whether typical volatility provides sufficient price movement to trigger grid levels.
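Whether a grid covers its fees comes down to the spacing between levels. The sketch below checks one buy-and-sell cycle at the top of an evenly spaced grid, where the percentage gap is smallest. The price range, grid counts, and 0.1% fee are assumptions for illustration and do not reflect Bitsgap's actual fee schedule or grid logic.

```python
def grid_cycle_profit(lower, upper, grids, fee_rate):
    """Gross and net return of one buy/sell cycle between the top two grid levels.

    Levels are evenly spaced in price, so the top step has the smallest
    percentage gap and is the hardest one to make profitable.
    `fee_rate` is paid on both the buy and the sell.
    """
    step = (upper - lower) / grids
    buy, sell = upper - step, upper
    gross = sell / buy - 1.0
    net = (sell * (1 - fee_rate)) / (buy * (1 + fee_rate)) - 1.0
    return gross, net

# Hypothetical range of 90 to 110 with an assumed 0.1% fee per side
for grids in (20, 100):
    gross, net = grid_cycle_profit(90.0, 110.0, grids, fee_rate=0.001)
    print(f"{grids:3d} grids: gross {gross:.3%}, net {net:+.3%} per cycle")
```

With 20 levels each cycle clears its fees comfortably. With 100 levels the spacing is so tight that every completed cycle loses money, even though the grid triggers far more often.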

The Progression From Multi-Exchange Simulation to Automated Bot Execution

The multi-exchange simulation exposes execution differences that single-platform testing misses. An arbitrage strategy might look profitable in theory, but simulation across actual exchange interfaces reveals whether price discrepancies persist long enough to execute both legs, or whether they vanish before your orders fill.

Most traders eventually move beyond manual simulation toward systematic execution. Platforms like Coincidence AI bridge this gap by deploying validated strategies as AI-powered bots that execute across multiple exchanges with the consistency that manual trading can't maintain. The simulator proves the strategy works. Automation ensures it runs without hesitation, fatigue, or emotional interference that erodes edges during manual execution.

4. Cryptohopper

Cryptohopper centers entirely on algorithmic strategy development. The platform provides approximately 100,000 units of virtual balance and supports testing across major cryptocurrencies, but the interface assumes you're building bots, not practicing discretionary trades.

The strategy marketplace creates a testing laboratory for prebuilt approaches. You can select strategies designed by other traders, run them through simulation, and measure performance before deploying capital. This accelerates the learning curve by exposing you to systematic approaches you wouldn't have discovered on your own.

Rule-Based System Design

Indicator-based strategy configuration lets you combine technical signals into rule-based systems. You define:

- When the bot enters positions

- How it sizes trades

- Where it places stops

- Under what conditions it exits

The simulation reveals whether those rules generate positive expectancy or just create the illusion of a system.

Scaling Through Automation

The platform's focus on automation reflects a broader truth about the evolution of trading. Manual execution works for learning mechanics, but systematic approaches scale better. Once you validate that a specific setup generates consistent returns, automating its execution removes the psychological barriers that prevent consistent implementation.

5. Phemex Mock Trading

Phemex provides a futures trading simulator that mirrors its live platform interface. The environment starts instantly without requiring full identity verification, removing friction from the testing process.

Large simulated USDT balances enable leveraged position testing without the capital requirements that make futures trading inaccessible for many new traders. You can experiment with 10x leverage, measure how position size affects liquidation risk, and learn how funding rates impact holding costs, all without exposure to actual liquidation.

The Practical Benefits of Interface Familiarity and Leveraged Market Simulation

The interface replication matters more than it initially appears. When you eventually trade with real capital, the transition feels seamless because every button, order type, and risk management tool already feels familiar. You're not learning a new platform under the pressure of live positions. You're executing in an environment you've already navigated dozens of times.

Futures markets behave differently from spot markets. Leverage amplifies both gains and losses, funding rates create holding costs that don't exist in spot trading, and liquidation risk introduces a failure mode that spot positions never face. Simulation lets you encounter these dynamics without the financial consequences that make leveraged learning so expensive.
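To get a feel for those dynamics before opening a simulated position, a rough calculation helps. The sketch below is a simplified approximation, not Phemex's formula: it ignores fees and exchange-specific rules, and the 0.5% maintenance margin rate, the 0.01% funding rate, and the three funding intervals per day are all assumed values.

```python
def approx_liquidation_price_long(entry, leverage, maintenance_margin_rate=0.005):
    """Rough liquidation price for an isolated-margin long.

    Simplified: the position is liquidated once losses reduce the initial
    margin (1 / leverage) to the maintenance margin. Fees are ignored.
    """
    return entry * (1 - 1 / leverage + maintenance_margin_rate)

def funding_cost(notional, funding_rate, intervals):
    """Funding paid by a long while the rate stays positive, summed over intervals."""
    return notional * funding_rate * intervals

entry = 50_000.0  # hypothetical entry price

for leverage in (5, 10, 20):
    liq = approx_liquidation_price_long(entry, leverage)
    print(f"{leverage:2d}x long: liquidated near {liq:,.0f} "
          f"({1 - liq / entry:.1%} below entry)")

# Holding a 10,000 USDT position for a week at an assumed 0.01% per interval,
# with an assumed three funding intervals per day
week_cost = funding_cost(10_000.0, 0.0001, intervals=3 * 7)
print(f"one week of funding: {week_cost:.2f} USDT")
```

Under these assumptions a 10x long is wiped out by a move of under 10%, which is an ordinary swing in crypto. Seeing that number first makes the simulated liquidation less of a surprise.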

6. Bitcoin Flip

Bitcoin Flip simplifies the simulation to the basic mechanics of trading. The mobile application provides a streamlined interface where users practice buying and selling Bitcoin with simulated funds, stripping away complexity to focus on fundamental market behavior.

Developing Market Intuition

New traders aren't confronted with dozens of order types, leverage options, and technical indicators. They see price movement and make directional decisions, building basic intuition about market timing before adding complexity. This works best as an entry point rather than a comprehensive testing environment. You learn that:

- Prices move

- Timing affects outcomes

- Emotional reactions influence decisions

But you don't gain the systematic validation needed before deploying real capital across more sophisticated strategies.

The limitation becomes the lesson. Once basic mechanics feel comfortable, the need for deeper testing becomes obvious. You've learned how to place trades. Now you need to learn whether your trading decisions generate consistent returns across different market conditions.

7. OKX Demo Trading

OKX replicates its full exchange environment in demo mode. Users receive approximately $10,000 in a refillable simulated balance and access the same interface used for live trading, including:

- Spot markets

- Derivatives

- A complete range of order types

Interface fidelity provides the most realistic preparation for actual exchange trading. Every feature you'll use with real capital exists in the demo environment. You learn where liquidation warnings appear, how to set conditional orders, and where to find historical trade data, all without financial risk.

The Integration of Continuous Capital Access and Real-Time Market Data Realism

The refillable balance removes a common simulation problem. Many platforms provide limited virtual capital that, once depleted, requires waiting periods or account resets. OKX lets you refill instantly, maintaining continuous access to testing regardless of simulated performance.

The realism extends to market data. You're not trading against simplified price feeds or delayed data. The demo environment connects to live market data, exposing you to actual volatility, spread costs, and order-book dynamics. Your simulated trades execute against real market conditions, just without actual capital at risk.

The Missing Layer Most Simulators Ignore

Even with access to simulated trading environments, many traders still struggle to answer the most important question in strategy development: what strategy should they actually test? Most crypto trading simulators assume traders already know the exact rules they want to evaluate.

The Strategy Design Burden

The platforms provide an environment for simulated trading, but they leave the responsibility of designing a strategy entirely to the user. In reality, strategy creation is one of the hardest parts of trading. Before a strategy can be tested, traders must define multiple components. They must:

- Determine entry rules

- Define exit conditions

- Choose indicators

- Select timeframes

- Adjust parameters

- Evaluate whether the results are statistically meaningful

This process is far more complex than simply placing trades.

The Institutional Standard

Professional trading firms address this challenge through systematic research. Strategies are typically backtested on large datasets before capital is deployed, allowing researchers to evaluate how the system performs under different market conditions. The scale of testing involved is often far beyond what individual traders perform manually.

The Strategy Development Gap

The critical failure point isn't execution. It's the step before execution, where traders must transform market observations into testable rules. A trader notices that Bitcoin tends to rally after sharp drops. That observation feels like insight, but it isn't a strategy yet.

- How sharp does the drop need to be?

- Over what timeframe?

- Does the pattern work across all volatility regimes, or only during specific market structures?

- What constitutes a rally worth trading?

- Where should the exit occur?

The Necessity of Sample Size

Answering these questions requires systematic testing across hundreds of historical occurrences. You need to measure whether a 10% drop produces better subsequent returns than a 5% drop. You need to test whether the pattern holds across one-hour, four-hour, and daily timeframes.

You need to determine whether the edge persists during bear markets, bull markets, and sideways consolidation.
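The "rally after a sharp drop" idea can be turned into a small event study. The sketch below is one possible way to do it, under stated assumptions: `prices` is a plain list of closing prices at a single timeframe, a drop is measured over a fixed `lookback` window, and the outcome is the return `horizon` bars later. The function names and parameters are hypothetical, chosen only for illustration.

```python
from statistics import mean


def forward_returns_after_drops(prices, drop_threshold, lookback, horizon):
    """Collect forward returns that follow drops of at least `drop_threshold`.

    A drop event occurs at index i when price fell by `drop_threshold`
    (0.05 means 5%) or more over the previous `lookback` bars. The forward
    return is measured `horizon` bars after the event.
    """
    results = []
    i = lookback
    while i + horizon < len(prices):
        change = prices[i] / prices[i - lookback] - 1
        if change <= -drop_threshold:
            results.append(prices[i + horizon] / prices[i] - 1)
            i += horizon  # skip ahead so measured events do not overlap
        else:
            i += 1
    return results


def summarize(returns):
    """Event count, average forward return, and share of positive outcomes."""
    if not returns:
        return {"events": 0, "mean_return": None, "hit_rate": None}
    return {
        "events": len(returns),
        "mean_return": mean(returns),
        "hit_rate": sum(r > 0 for r in returns) / len(returns),
    }


if __name__ == "__main__":
    # Tiny made-up series; real testing needs far more data than this.
    prices = [100, 100, 90, 95, 99, 100, 100, 80, 88, 90]
    for threshold in (0.05, 0.15):
        rets = forward_returns_after_drops(prices, threshold, lookback=1, horizon=2)
        print(threshold, summarize(rets))
```

Running the same function with different thresholds, on series sampled at different timeframes, and on bull, bear, and sideways periods separately gives the comparison the questions above call for. A handful of events, as in this toy series, is exactly the anecdote-level sample that does not prove an edge.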

The Fragmented Workflow Trap

Most traders approach this process manually. They experiment with indicators, run isolated simulations, and adjust rules based on recent results. The workflow is fragmented. They might test a moving average crossover for a week, switch to RSI signals the next week, then try combining both without ever collecting enough data to determine whether either approach generates consistent returns.

When Manual Testing Breaks Down

The pattern surfaces constantly in trading communities. Maintaining the data and analytics layer is harder than execution itself. Building indicator calculations and data pipelines from scratch becomes a significant burden for individual developers who just want to test whether their trading idea has merit.

Someone spends months designing rules to avoid bad trades, then struggles psychologically when those rules result in no activity. The system sits idle for days, and the trader starts questioning everything:

- Is the strategy broken?

- Is it correctly avoiding setups that don't meet the criteria?

Distinguishing between healthy system silence and genuinely broken strategies is harder than expected.

Bridging the Structural Gap

This psychological challenge reveals a deeper structural problem. Traders need tools that help them build strategies systematically, not just execute them. They need environments that make iteration and experimentation easier by separating analytics from execution, allowing rapid testing of different rule combinations without rebuilding infrastructure each time.

The Backtesting Infrastructure Problem

Individual traders rarely have access to tools that make comprehensive strategy testing simple. Professional quant funds use sophisticated backtesting platforms that evaluate strategies across thousands of historical trades, measuring statistical significance before any capital gets deployed.

According to research from Ernest Chan, quantitative trader and author of Algorithmic Trading, strategies are typically evaluated across thousands of historical trades during backtesting to determine whether a statistical edge exists before live deployment. This level of rigor distinguishes systematic trading from pattern-recognition gambling.

The Pre-Defined Rule Constraint

Most crypto trading simulators don't provide this capability. They let you practice placing trades against live or recent price data, but they don't help you design the strategy in the first place. You're expected to arrive with a complete set of rules already defined, ready to test them manually.

The Necessity of Systematic Data Testing Over Manual Paper Trading

The gap becomes obvious when you consider what traders actually need:

- They need to test whether momentum strategies outperform mean-reversion strategies for their chosen assets.

- They need to compare different indicator combinations to see which produces the highest risk-adjusted returns.

- They need to measure how performance changes as they adjust position-sizing rules or exit timing.

These questions can't be answered through manual paper trading. They require running systematic tests across large datasets, measuring consistency, and isolating which strategy elements actually drive performance versus which just fit historical noise.

From Simulation to Systematic Validation

The missing layer is the bridge between market observation and testable strategy. Traders need tools that help them transform intuition into rules, then validate those rules across sufficient data to determine statistical reliability. Once a strategy proves itself through systematic testing, the natural progression isn't more manual simulation. It's automation.

Platforms like Coincidence AI let traders deploy validated strategies as AI-powered bots that execute across multiple exchanges without requiring constant manual intervention. The simulator becomes the proving ground where strategies earn the right to run autonomously, and automation becomes the tool that executes those proven approaches with a consistency that manual trading cannot maintain.

The Design-Execution Disconnect

Most simulators stop at execution practice, leaving traders to design their own strategy.

- They simulate trades without helping traders decide which trades to simulate.

- They provide realistic market conditions without the analytical framework needed to assess whether a strategy actually works under those conditions.

This creates the final gap in most crypto trading simulators. They simulate trades, but they don't help traders build and validate strategies efficiently, which is the step that ultimately determines whether a trading idea deserves to be used in live markets.

How Coincidence AI Helps Traders Simulate Crypto Strategies

The challenge most simulators leave unresolved is strategy development. Traders are expected to arrive with fully defined rules before testing begins, even though defining those rules is often the hardest part of the process. Coincidence AI approaches crypto simulation from a different direction. Instead of starting with manual trades or predefined strategies, the platform allows traders to describe a strategy in plain English.

A trader can write a simple instruction, such as "Buy BTC when the 50-day moving average crosses above the 200-day moving average," or describe a momentum or breakout rule using natural language.

Coincidence AI converts that description into a structured trading strategy and immediately runs a historical backtest. This allows traders to see how the idea would have performed across past market data without manually coding indicators, building spreadsheets, or configuring complex trading logic.

From Language to Logic

The translation happens automatically. What would typically require programming knowledge or hours of indicator configuration becomes a single sentence. The system interprets the intent, constructs the technical rules, and applies them across historical price data. This removes the infrastructure burden that prevents most traders from beginning.

- You don't need to understand how to calculate a moving average programmatically.

- You don't need to build data pipelines or write conditional statements.

The technical implementation happens behind the scenes while you focus on whether the strategy concept has merit.
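For readers curious about what a sentence like the moving average example might expand into, here is a minimal sketch of a crossover rule and a long-only backtest. It is not Coincidence AI's implementation, which the article does not describe. The function names, the exit rule (sell when the fast average crosses back below the slow one), and the absence of fees and slippage are all assumptions made for illustration.

```python
def sma(values, window):
    """Simple moving average; entries are None until `window` values exist."""
    out = [None] * len(values)
    running = 0.0
    for i, v in enumerate(values):
        running += v
        if i >= window:
            running -= values[i - window]  # drop the value leaving the window
        if i >= window - 1:
            out[i] = running / window
    return out


def crossover_backtest(closes, fast=50, slow=200):
    """Long-only crossover backtest returning per-trade fractional returns.

    Assumptions: buy at the close of the bar where the fast SMA crosses
    above the slow SMA, sell at the close where it crosses back below,
    no fees, no slippage, one position at a time.
    """
    fast_ma, slow_ma = sma(closes, fast), sma(closes, slow)
    trades, entry = [], None
    for i in range(1, len(closes)):
        if fast_ma[i - 1] is None or slow_ma[i - 1] is None:
            continue  # not enough history yet for both averages
        crossed_up = fast_ma[i - 1] <= slow_ma[i - 1] and fast_ma[i] > slow_ma[i]
        crossed_down = fast_ma[i - 1] >= slow_ma[i - 1] and fast_ma[i] < slow_ma[i]
        if entry is None and crossed_up:
            entry = closes[i]
        elif entry is not None and crossed_down:
            trades.append(closes[i] / entry - 1)
            entry = None
    return trades


if __name__ == "__main__":
    # Short made-up series with small windows so the logic is easy to trace.
    closes = [10, 9, 8, 7, 8, 10, 12, 11, 9, 7]
    print(crossover_backtest(closes, fast=2, slow=3))
```

Even this small version has to handle warm-up periods, crossing detection, and position state, which hints at why hand-building the logic for every new idea slows traders down.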

Accelerating the Feedback Loop

Speed matters as much as simplicity. Traditional backtesting requires:

- Setting up environments

- Importing data

- Coding logic

- Debugging errors

- Running tests

That process can take hours or days. Here, it takes seconds. You describe the idea, and the platform shows you whether it works.

Performance Validation

Once the backtest runs, the platform provides key performance insights that help traders evaluate whether the strategy has a viable statistical foundation. Win rate, maximum drawdown, total return, average trade duration, and risk-adjusted metrics appear immediately. These numbers answer the questions that matter before capital deployment.

- Does the strategy maintain positive expectancy across different market conditions?

- What's the worst drawdown you should expect?

- How many consecutive losses typically occur before the next winner?

Traders can review historical performance not just as a single profit number, but as a series of trades showing when the strategy worked, when it failed, and under what conditions each outcome occurred. This granular view reveals whether the edge is consistent or whether a few lucky trades created the illusion of profitability.
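The metrics named above can all be derived from a simple list of per-trade returns. The sketch below shows one way to compute them, assuming each trade is expressed as a fractional return and that returns compound on the full account. The function name and output keys are hypothetical, and the article does not specify how any particular platform calculates these figures.

```python
def performance_summary(trade_returns):
    """Summarize per-trade fractional returns (0.02 means +2%)."""
    if not trade_returns:
        raise ValueError("need at least one trade")

    equity, peak, max_drawdown = 1.0, 1.0, 0.0
    streak = longest_losing_streak = 0
    for r in trade_returns:
        equity *= 1 + r                      # compound each trade
        peak = max(peak, equity)             # highest equity seen so far
        max_drawdown = max(max_drawdown, 1 - equity / peak)
        streak = streak + 1 if r <= 0 else 0  # count consecutive non-winners
        longest_losing_streak = max(longest_losing_streak, streak)

    wins = sum(1 for r in trade_returns if r > 0)
    return {
        "trades": len(trade_returns),
        "win_rate": wins / len(trade_returns),
        "expectancy": sum(trade_returns) / len(trade_returns),  # mean return per trade
        "total_return": equity - 1,
        "max_drawdown": max_drawdown,
        "longest_losing_streak": longest_losing_streak,
    }


if __name__ == "__main__":
    # Hypothetical trade log: two winners around two consecutive losers.
    print(performance_summary([0.10, -0.05, -0.05, 0.20]))
```

In this made-up log the win rate is only 50 percent, yet expectancy is positive because the winners are larger than the losers. Reading the numbers together rather than one at a time is what distinguishes a consistent edge from a few lucky trades.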

Rapid Iteration

Because the strategy is generated as a structured system, traders can quickly refine their ideas. Entry conditions, exit rules, or indicator parameters can be modified and tested again, allowing users to iterate on strategies much faster than traditional manual testing workflows.

Iterative Strategy Refinement

A trader might test a breakout strategy with a 5 percent threshold, see mediocre results, adjust to 7 percent, and immediately measure how performance changes. They can compare different stop-loss distances, experiment with trailing exits versus fixed targets, or test whether adding a volume filter improves results. This experimentation cycle surfaces which strategy elements actually drive performance.

Often, traders discover that specific parameter values matter less than the broader market regime. A momentum approach might work across any reasonable setting during trending periods and fail across all of them during consolidation. That insight changes everything about deployment timing.
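The threshold comparison described above amounts to a parameter sweep: run one rule with several settings and tabulate the results side by side. The following sketch assumes a deliberately simple breakout definition (close exceeds the prior `lookback`-bar high by `threshold`, exit after a fixed `hold` period). The rule, the function names, and the default values are illustrative assumptions rather than any platform's actual logic.

```python
def breakout_trades(closes, threshold, lookback=20, hold=5):
    """Per-trade returns for a fixed-hold breakout rule.

    Enter at the close when it exceeds the highest close of the previous
    `lookback` bars by at least `threshold` (0.05 means 5%); exit `hold`
    bars later. No fees or slippage; one position at a time.
    """
    trades = []
    i = lookback
    while i + hold < len(closes):
        prior_high = max(closes[i - lookback:i])
        if closes[i] >= prior_high * (1 + threshold):
            trades.append(closes[i + hold] / closes[i] - 1)
            i += hold  # stay in the position until the exit bar
        else:
            i += 1
    return trades


def sweep(closes, thresholds, **rule_kwargs):
    """Run the same rule across several thresholds and tabulate the results."""
    rows = []
    for t in thresholds:
        trades = breakout_trades(closes, t, **rule_kwargs)
        avg = sum(trades) / len(trades) if trades else None
        rows.append({"threshold": t, "trades": len(trades), "avg_return": avg})
    return rows


if __name__ == "__main__":
    # Made-up series; a real sweep needs enough history to produce many trades.
    closes = [100, 100, 100, 106, 110, 100, 100, 108, 112]
    for row in sweep(closes, [0.05, 0.07], lookback=3, hold=1):
        print(row)
```

Note how raising the threshold reduces the number of trades. A setting that looks better on fewer trades may simply be fitting noise, so trade count belongs next to every performance figure, and running the sweep separately on trending and consolidating periods is one way to check the regime effect mentioned above.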

The Infrastructure Burden

For individual developers who just want to test whether a trading idea has merit, maintaining the data and analytics layer, from indicator calculations to data pipelines, is often harder than execution itself.

Platforms that handle this infrastructure allow traders to focus on strategy logic rather than technical implementation.

From Validation to Execution

Once a strategy demonstrates consistent results, it can be deployed automatically to supported exchanges such as Bybit and KuCoin, allowing the system to execute trades without constant manual monitoring. This progression transforms the simulator's role. Instead of serving primarily as a place to practice trades, the platform becomes a strategy-validation environment where traders can test, refine, and deploy systematic trading ideas.

The shift matters because manual execution introduces the very inconsistencies that systematic testing is designed to eliminate. A trader validates that a specific setup generates positive expectancy across 200 historical occurrences, then fails to execute it consistently because they:

- Hesitate during volatile moments

- Miss setups during sleep hours

- Override rules when recent losses create emotional pressure

The Role of Automation in Eliminating Psychological Interference and Systematic Deployment

Automation removes that gap. The same logic that proved itself through backtesting runs continuously, monitoring markets and executing when conditions match, without the psychological interference that undermines manual implementation.

If you are searching for the best crypto trading simulator, the most valuable capability is not simulated trades. It is the ability to determine whether a strategy actually works before risking capital, then deploy it systematically once validation is complete.

Trade With Plain English With Our AI Crypto Trading Bot

Try Coincidence AI and describe a trading idea in plain English. Your first simulation will generate a historical backtest showing how the strategy would have performed across past crypto market conditions, giving you immediate insight into whether the idea is worth deploying.

The barrier between having a trading idea and knowing whether it works shouldn't require programming skills, spreadsheet engineering, or weeks of manual testing. Most traders abandon promising strategies not because the concepts are flawed, but because the validation process feels inaccessible.

Accelerating Strategy Development Through Instant Feedback

When you can express a hypothesis as simply as "buy when price breaks above the 20-day high with volume confirmation," and receive performance data seconds later, strategy development becomes something you can do repeatedly rather than occasionally. This approach transforms how quickly you learn what works. Instead of spending a month testing one idea through manual simulation, you can evaluate ten different approaches in an afternoon.

Each backtest reveals not just whether the strategy made money historically, but also how it behaved across different volatility regimes, what its typical drawdown looked like, and whether the edge is consistent or tied to specific market conditions that may not repeat.

Automated Deployment for Consistent Strategy Execution

Once you find a strategy that demonstrates reliable performance on historical data, Coincidence AI automatically deploys it to exchanges like Bybit and KuCoin. The same logic that passes validation runs continuously without requiring you to:

- Monitor charts

- Overcome hesitation during volatile moments

- Maintain discipline through losing streaks

The gap between knowing something works and executing it consistently disappears when the system handles implementation.

Humza Sami

CTO CoincidenceAI